ML4Sci #16: Reflections on 6 months of ML4Sci; Photo Up-Sampling Algorithm Shows Racial Bias; US Bills on National AI Cloud and NSF Funding;

An "In the News" edition

Hi, I’m Charles Yang and I’m sharing (roughly) weekly issues about applications of artificial intelligence and machine learning to problems of interest for scientists and engineers.

If you enjoy reading ML4Sci, send us a ❤️. Or forward it to someone who you think might enjoy it!

As COVID-19 continues to spread, let’s all do our part to help protect those who are most vulnerable to this epidemic. Wash your hands frequently (maybe after reading this?), check in on someone (potentially virtually), and continue to practice social distancing.

It has been almost exactly 6 months since the inaugural issue of the ML4Sci newsletter. The world we lived in was much different back then. The narrative around science, scientific communication, and AI lacked some of the newfound urgency it has today.

In an increasingly interconnected, technically complex, and AI-infused world, it is important to understand how AI is shaping the development of science and technology. As I’ve discussed previously, one core thesis of this newsletter is that the development of this intersection differs in important ways from that of “pure AI” or overall science. The challenges posed by AI, with the unique expertise it requires, its demanding hardware and software budget, and a research field distinctively dominated by tech companies, coupled with the unique experimental and epistemological motivations of science, infuse this intersection with its own nuances. This newsletter is focused on uncovering these nuances and their implications for how we do science. Thanks for joining me on this journey as we navigate this new “endless frontier”.

As always, please reach out at ml4science@gmail.com or on Twitter @charlesxjyang21 if you have thoughts about what you would like to see in this newsletter.

Some of my essays on ML4Sci that you might have missed:

Deep Learning in Science: published on Medium in 2019, this essay contains some of my earliest thoughts about deep learning for scientific problems that helped motivate me to start this newsletter.

ML4Sci #8: Defining the new AI-powered SaaS: Science as a Service

ML4Sci #12: Thoughts on COVID-19, Scientific Gatekeeping, and Substack Newsletters

I didn’t have time to write up coverage of different papers these past 2 weeks, so instead I’ve put together extended coverage of recent news and developments in both tech and science. Enjoy!

📰In the News

ML

“What I learned from looking at 200 machine learning tools”

Opportunities and Challenges in Explainable Artificial Intelligence (XAI): A Survey

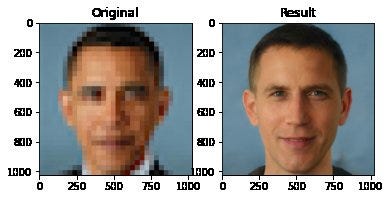

A new photo up-sampling algorithm published at the prestigious CVPR conference is shown to have significant racial biases. See below for a pixelated Obama being up-sampled into a white male with this algorithm.

A post from The Gradient gives a good overview of what happened and the ensuing Twitter drama. As discussed in the post, there was quite a lot of controversy on Twitter over comments about bias in AI systems made by Yann LeCun (NYU professor, Facebook Chief AI Scientist). Lost in the tangle of Twitter threads? If only we had a forum for scientific debate that wasn’t Twitter 🤔 See ML4Sci #12 for some of my thoughts on what the solution might look like.

White paper from the startup LightOn: massively parallel optical computing chips for AI

From Montreal AI Ethics Institute: the State of AI Ethics (June 2020)

CSET@Georgetown: Career Preferences of high-value AI Talent report. tl;dr: it’s the US, for now

OpenAI’s new GPT-3 writes Harry Potter fan fiction that is disturbingly realistic and closer to reaching the other side of the uncanny valley. More fictional writing from GPT-3 here

A 2016 blog post on why new datasets are more important than new algorithms. For an alternative perspective, check out Jeff Ding’s ChinAI translation of a debate on a Chinese forum about data vs. algorithms. Of course, the debate gains considerable nuance when placed in the context of ML4Sci. Ethically, generating large-scale scientific data is very different from labelling faces. Culturally, different scientific fields have different incentive structures around open-sourcing data than, say, Silicon Valley companies. Sometimes, these incentive structures actually discourage open-sourcing data, which can significantly limit innovation and reproducibility. Finally, ML algorithms often cannot be copied directly over to a scientific problem; they need to be tweaked or altered to fit scientific constraints or encode scientific knowledge.

Science

AI for tracking Zebrafish [data]

Sign up for Physics Meets ML online seminars!

🧬Asymmetry of Weak Force might explain chirality in biological molecules [Quanta Magazine]

🌞 A piperidinium salt stabilizes efficient metal-halide perovskite solar cells. Perovskite solar cells are widely considered to be the next generation of solar cells: just as efficient as silicon-based cells, but orders of magnitude cheaper to produce. The limiting factor has always been their degradation in the presence of moisture, and this paper represents the latest improvement in perovskite humidity tolerance. I’ve been following perovskites for a while now (since 2016), and their rapid development and improvement have been astonishing to follow (it really mirrors that of deep learning, actually).

The Science of Science

☁️New Bicameral US Bill to develop a National AI cloud platform for researchers. This follows on the heels of the EU announcing its Gaia-X cloud platform.

A Stanford study in Nature highlights how complex data workflows lead to reproducibility issues. [Stanford Blog]

“Do I hold onto this information, which by this point is pretty clear-cut, or do I inform the world? The answer is: You’re cursed if you do and cursed if you don’t. But by far, to me, the better option was to make the results publicly available.”

🌎Out in the World of Tech

Wrongfully arrested by a facial recognition algorithm [NYT]

Quora becomes a remote-first company

MIT Tech Review: Trump’s freeze on new visas could threaten US dominance in AI

Thanks for Reading!

I hope you’re as excited as I am about the future of machine learning for solving exciting problems in science. You can find the archive of all past issues here and click here to subscribe to the newsletter.

Have any questions, feedback, or suggestions for articles? Contact me at ml4science@gmail.com or on Twitter @charlesxjyang